COMPUTERS OF THE FUTURE: Intelligent Machines and Virtual Reality - 1. From Abacus to Supercomputer

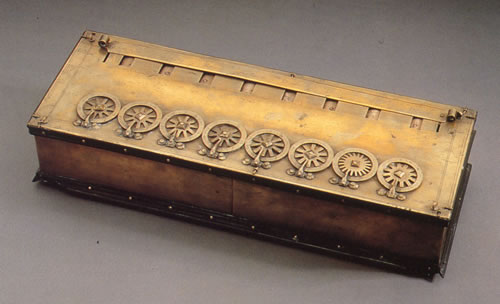

Figure 1. The Pascaline was one of the earliest calculating machines.

Figure 2. Engineers at the Science Museum in London produced a working version of the Analytical Engine to Charles Babbage's original specifications.

Figure 3. The Mark I computer at Manchester University.

Figure 4. This tiny memory chip from a modern computer fits easily on the tip of a child's finger.

Ever since people began to live in towns and cities, thousands of years ago, they have had to deal with numbers. Merchants, for instance, had to figure out the value of the goods they bought and sold. As the number of calculations grew, so did the need to make working with numbers easier. This led to the invention of the first calculators.

As long ago as 3000 BC, the inhabitants of Babylon (present-day Iraq) performed addition and subtraction by moving counters around on lines traced on the ground. Later, the lines, which represented units, tens, hundreds, and so on, were drawn on dust-covered boards. From this practice came the name abacus for the simplest of counting devices, since abakos in ancient Greek means "board." Among the earliest counters were pebbles, and our word calculate is derived from calculus, the Latin for "pebble."

Eventually, the kind of abacus with which we are most familiar was developed. It consists of beads strung on wires or rods that are held within a frame. This device is still used by shopkeepers and school children in parts of the Far East.
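The counting-board method described above, with one line each for units, tens, hundreds, and so on, can be sketched in a few lines of Python. This is only an illustration of the idea, not a reconstruction of any historical procedure; the function names `to_columns`, `add_on_board`, and `from_columns` and the four-line board are assumptions made for the example.

```python
def to_columns(n, width=4):
    """Lay out a whole number as counters per line, units line first."""
    if n < 0 or n >= 10 ** width:
        raise ValueError("number does not fit on the board")
    columns = []
    for _ in range(width):
        columns.append(n % 10)  # counters on this line
        n //= 10                # move on to the next line (tens, hundreds, ...)
    return columns


def add_on_board(a, b, width=4):
    """Add two numbers line by line, trading ten counters for one on the next line."""
    result = []
    carry = 0
    for count_a, count_b in zip(to_columns(a, width), to_columns(b, width)):
        total = count_a + count_b + carry
        result.append(total % 10)  # counters left on this line
        carry = total // 10        # counters passed up to the next line
    if carry:
        result.append(carry)       # the board needs one extra line
    return result


def from_columns(columns):
    """Read the board back as an ordinary number."""
    return sum(count * 10 ** place for place, count in enumerate(columns))


board = add_on_board(275, 148)
print(board, from_columns(board))
```

Each position in the list plays the part of one line on the board, and the carry step is the trade of ten counters on one line for a single counter on the next.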

Mechanical Calculators

In seventeenth-century Europe, more complicated calculating machines started to appear. One of the most famous was the Pascaline, invented in 1642 by the brilliant French mathematician Blaise Pascal (see Figure 1). An even earlier calculating machine was devised by a little-known German, Wilhelm Schickard, in 1623. People used Schickard's Calculating Clock to add, subtract, and multiply by manipulating a clever assembly of interlocking gears and levers.

In 1822, an Englishman, Charles Babbage, designed an extraordinary calculating machine that he called the Difference Engine. Babbage spent over 10 years and a small fortune trying to turn the design into a practical device, but it proved too much of a challenge for the engineering tools of his time. Only a small part of it was made. That part worked perfectly, but by then Babbage had already drawn up plans for an even more ambitious machine known as the Analytical Engine (see Figure 2). It would have been the size and weight of a small locomotive and, given enough time, could have tackled any calculation. Again, it proved to be too expensive and difficult to build. But had it been constructed, the Analytical Engine would have been the world's first true computer.

The Information Machine

A computer is a machine that processes information. In other words, it works on facts and figures, known as DATA, and produces useful results. The step-by-step instructions that tell the computer exactly what to do with the data make up a PROGRAM. A true computer must be able to store both the data it is working on and the program that tells it what to do. This means that, in addition to a processing section, a computer must also have a storage section, or MEMORY. Babbage's Analytical Engine was intended to have both a processor and a memory in the form of an ingenious arrangement of axles and toothed wheels. Even if it had been built, however, Babbage's computer would have been slow since its parts were mechanical.
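The idea of keeping both the program and the data in one memory can be illustrated with a toy machine. The sketch below is purely hypothetical: the instruction names (`LOAD`, `ADD`, `STORE`, `HALT`), the memory layout, and the `run` function are invented for this example and do not correspond to the Analytical Engine or to any real computer.

```python
def run(memory):
    """Execute the program stored in `memory`, which also holds the data."""
    accumulator = 0       # the processor's single working value
    program_counter = 0   # address of the next instruction to fetch
    while True:
        operation, *operands = memory[program_counter]
        program_counter += 1
        if operation == "LOAD":      # copy a data cell into the processor
            accumulator = memory[operands[0]]
        elif operation == "ADD":     # add a data cell to the working value
            accumulator += memory[operands[0]]
        elif operation == "STORE":   # write the working value back to memory
            memory[operands[0]] = accumulator
        elif operation == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {operation}")


# Cells 0-3 hold the program; cells 4-6 hold the data it works on.
memory = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT",),
    2,   # cell 4: first number
    3,   # cell 5: second number
    0,   # cell 6: the result goes here
]

run(memory)
print(memory[6])  # prints 5
```

Because the instructions sit in the same memory as the numbers, giving the machine a different job means writing different contents into cells 0 to 3 rather than rebuilding the machine.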

The next leap forward in computing had to wait until electricity could be harnessed to run machines, allowing computer parts to operate much faster. One such machine, designed by Herman Hollerith, an American, helped to count and sort information on punched cards for the United States Census of 1890.

Not until the 1940s, however, did the age of modern computing really begin. That decade saw the construction of the first completely electronic information processors, which could do hundreds or even thousands of calculations every second. One of these devices, called Colossus, allowed British scientists to decode top-secret German messages during World War II. Meanwhile, in the United States, an even more powerful electronic processor, known as ENIAC, was also enlisted in the war effort. ENIAC was built in 1946 at the University of Pennsylvania to calculate the range of artillery shells fired from large guns. However, despite the great calculating power of ENIAC and Colossus, neither could be classed as a true computer because each one lacked a memory in which to store its program. Instead, the title World's First Computer rightly belongs to another device – the Mark I, which was built at Manchester University, England (see Figure 3). On June 21, 1948, the Mark I solved a problem for the first time using a stored program and data.

Speedy Developments

The earliest electronic computers were very large, expensive to build, and awkward to use. They filled whole rooms, needed teams of experts to operate and maintain them, and often broke down because the components from which they were made had fairly short life spans. Nevertheless, they proved to be invaluable assets during World War II. In fact, the needs of war helped to spur the development of computers, so it is hardly surprising that at first they were used mainly for military work.

Experts soon realized, however, that computers could be turned to many other tasks simply by changing the programs and data that were fed to them. As a result, by the mid-1950s computers were being used in industry and business. Given suitable programs, they could do everything from analyzing structural designs to calculating company payrolls to playing chess. Yet their size and the costs of their construction and operation threatened the expansion of their use.

The limitations of cost and size were largely caused by the thousands of bulky, power-thirsty components known as triodes, or vacuum tubes, that were used to build the early computers. But in 1948, three American physicists invented a device called a TRANSISTOR, which eventually replaced the triode in most electronic devices – including computers. Transistors, and the computers that used them, were smaller, worked faster, used less power, were more reliable, and could be made more cheaply than the triodes and triode-based computers.

In 1959, engineers learned how to make several tiny transistors on one small crystal, or CHIP, of a substance known as SILICON. Soon, more and more transistors were being squeezed onto silicon chips, each chip only about one quarter of an inch square (see Figure 4). As a result, computers could be made smaller while at the same time they could perform more tasks faster.

Eventually, it became possible to put the entire processing section of a small computer onto a single chip, known as a MICROPROCESSOR. A handful of other chips could be made to serve as a memory for holding programs and data. By the late 1970s, thanks to microchip technology, manufacturers were able to produce computers of such small size and low cost that they could be purchased for use in the home.

A Tool for Our Time

About 60 years ago there were no computers as we know them. Now the world seems to be full of them. This computer revolution has been made possible by rapid developments in the machinery of computers, or the HARDWARE, and in the programs, or the SOFTWARE, which tell computers what to do.

Today, we are unaware of most of the computers that surround us, and the many functions they perform. These "invisible" computers are tucked away on silicon chips the size of a fingernail inside washing machines, automobile engines, and a great many other ordinary devices. Every time we pick up a phone or fly in an airplane, we depend on computers. Schools, libraries, transport systems, police departments, hospitals, and businesses now rely on computers to carry out a huge variety of jobs – from keeping records to saving lives. Some computers that serve important medical functions are so small they can be placed inside the human body. Other computers, called supercomputers, are much larger and incredibly powerful; they can do over a thousand trillion calculations in just one second. An average person working non-stop day and night would require more than 30 million years to do this many calculations!
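The comparison between a supercomputer and a person can be checked with a little arithmetic. The sketch below assumes that the person manages one calculation every second without ever stopping; that rate is an assumption made for the example, not a figure from the text, as are the constant and function names.

```python
SUPERCOMPUTER_CALCS_PER_SECOND = 10 ** 15   # "a thousand trillion" per second
PERSON_CALCS_PER_SECOND = 1                 # assumed rate for a human
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60    # average year, including leap days


def years_for_person(calculations, rate=PERSON_CALCS_PER_SECOND):
    """Years of non-stop work a person needs for the given number of calculations."""
    seconds_needed = calculations / rate
    return seconds_needed / SECONDS_PER_YEAR


years = years_for_person(SUPERCOMPUTER_CALCS_PER_SECOND)
print(f"About {years / 1e6:.1f} million years")
```

Under that assumption, one second of supercomputer work comes to a little under 32 million years of human effort, consistent with the "more than 30 million years" quoted above.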

As well as handling numbers, computers can manipulate pictures, sounds, and text in whatever form we wish. Thus computers allow us to think, imagine, and express ourselves in an astonishing variety of new ways.

Computers have already had a strong influence – both good and bad – on modern society. And the computer age is still very young. Over the next 50 years, the world will be further transformed, perhaps not always for the better, as computers are used in more and more aspects of our lives.

| PLATO Spots the Poison Plants | ||

|---|---|---|

|