X-ray tube

An X-ray tube is a vacuum tube containing electrodes that accelerate electrons and direct them onto a metal anode, where their impacts produce X-rays.

Development of the X-ray tube

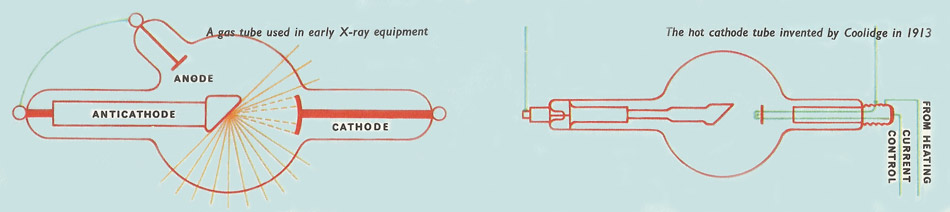

Following Wilhelm Röntgen's discovery of X-rays in 1895, the applications of the new rays, particularly in diagnostic medicine, were so immediately apparent that people all over the world began to build and use X-ray equipment. In those early days the tubes in use were known as gas tubes, because their operation depended on the small quantity of air intentionally left in them when they were evacuated. Each tube contained an anode and a cathode and, in addition, a third electrode known as an anticathode. This was placed directly opposite the cathode so that the cathode rays struck it instead of the glass wall. The anticathode was, in fact, the X-ray source, and because it intercepted the cathode rays it also protected the tube walls from the damaging effects of cathode-ray bombardment.

Although these early gas tubes worked, they were never very satisfactory, because they deteriorated rapidly with use. Furthermore, the X-ray beams they produced were so weak that inconveniently long exposures were needed to obtain a good X-ray picture, or radiograph. In 1913 a great advance was made when W. D. Coolidge invented the hot-cathode tube. In this tube the cathode was a spiral wire heated to incandescence by a small electric current, as in an electric light bulb. Besides carrying the heating current, the spiral was also held at a high negative potential. The electrons emitted by the hot filament were therefore accelerated as a stream of cathode rays toward the positively charged anticathode at the other end of the tube, where, as in the gas tube, their impacts produced X-rays. The hot-cathode tube had two advantages: it had a much longer working life, and its X-ray output could be controlled. The heating current set how many electrons the filament emitted, and hence the intensity of the beam, while the potential difference between the cathode and the anticathode set the energy of the electrons, and hence the penetrating power of the X-rays.
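The two controls of the hot-cathode tube can be illustrated numerically. The sketch below is not taken from the article. It assumes the standard textbook relations: an electron accelerated from rest through a potential difference V gains kinetic energy E = eV; the shortest X-ray wavelength the tube can emit is λ_min = hc/(eV) (the Duane-Hunt limit, reached when one electron's entire energy becomes a single photon); and a tube current I corresponds to I/e electrons striking the anticathode each second. The function names and the 50 kV, 100 kV and 1 mA operating points are hypothetical choices for illustration.

```python
# Physical constants: exact SI values since the 2019 SI redefinition.
ELEMENTARY_CHARGE = 1.602176634e-19  # e, coulombs
PLANCK_CONSTANT = 6.62607015e-34     # h, joule-seconds
SPEED_OF_LIGHT = 299_792_458.0       # c, metres per second


def electron_energy_joules(tube_voltage: float) -> float:
    """Kinetic energy (J) gained by one electron accelerated from rest
    through the potential difference tube_voltage (V): E = eV."""
    return ELEMENTARY_CHARGE * tube_voltage


def min_wavelength_metres(tube_voltage: float) -> float:
    """Shortest X-ray wavelength (m) the tube can emit, assuming the whole
    electron energy eV goes into one photon: lambda_min = hc / (eV)."""
    return PLANCK_CONSTANT * SPEED_OF_LIGHT / electron_energy_joules(tube_voltage)


def electrons_per_second(tube_current: float) -> float:
    """Electrons reaching the anticathode each second for a given tube
    current (A); in a hot-cathode tube this is set by the heating current."""
    return tube_current / ELEMENTARY_CHARGE


if __name__ == "__main__":
    # Hypothetical operating points chosen only for illustration.
    for kv in (50, 100):
        volts = kv * 1e3
        energy_kev = electron_energy_joules(volts) / ELEMENTARY_CHARGE / 1e3
        wavelength_pm = min_wavelength_metres(volts) * 1e12
        print(f"{kv} kV: electron energy {energy_kev:.0f} keV, "
              f"shortest wavelength {wavelength_pm:.1f} pm")
    print(f"1 mA: {electrons_per_second(1e-3):.2e} electrons per second")
```

Raising the voltage raises each electron's energy and shortens the minimum wavelength (doubling V halves λ_min), which is why the potential difference governs penetrating power. The electron count depends only on the current, which is why the heating current governs intensity.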